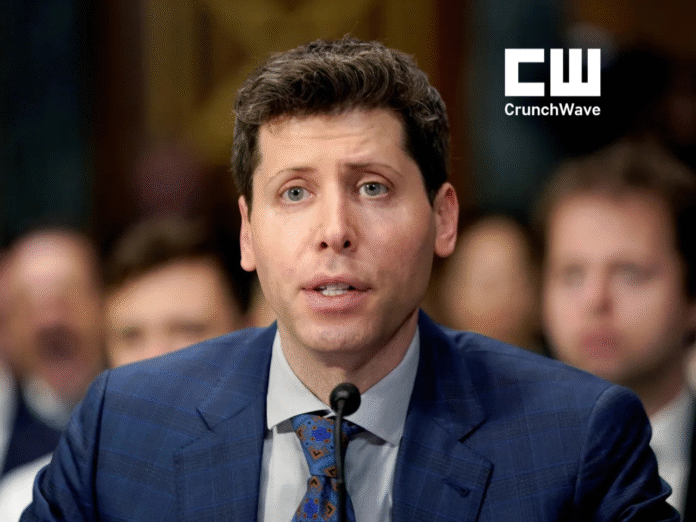

As artificial intelligence evolves at unprecedented speed, the world is divided between excitement over its potential and anxiety over its consequences. One of the most influential voices trying to bridge this divide is Sam Altman, CEO of OpenAI — the company behind ChatGPT, DALL·E, and some of the most powerful AI systems ever created.

In his May 2023 testimony before the U.S. Senate Judiciary Subcommittee on Privacy, Technology, and the Law, Altman delivered a clear message:

AI is powerful enough to transform society, and dangerous enough to require global rules.

This hearing marked a pivotal moment for AI governance, setting the tone for how world powers might regulate an emerging technology that could reshape economies, politics, and everyday life.

From Silicon Valley Entrepreneur to the Face of Global AI Governance

Before becoming the public face of modern artificial intelligence, Altman built his reputation as a founder, investor, and technologist.

- Born in Chicago (1985)

- Stanford computer science dropout

- Co-founder of Loopt, acquired by Green Dot in 2012

- Former President of Y Combinator

- Co-founder of OpenAI in 2015

Under his leadership, OpenAI scaled rapidly, producing milestone technologies:

- GPT-2 (2019): the first truly alarming glimpse of AI-generated content

- GPT-3 (2020): the breakthrough that stunned even skeptics

Altman has since become both an evangelist and a critic of the technology he helped unleash — making his voice one of the most important in global AI discourse.

A Hearing That Started With an AI Mimic — And a Warning

The Senate hearing opened with a striking demonstration:

A realistic AI-generated voice mimicking Senator Richard Blumenthal reading AI-written text.

“If you were listening from home, you might have thought that voice was mine,” Blumenthal said. “But it was not.”

This set the stage for the core issue Altman addressed:

AI is powerful, accessible, and increasingly indistinguishable from human output.

And without guardrails, it can:

- spread disinformation

- manipulate voters

- automate jobs at scale

- reshape global geopolitics

- challenge copyright and creativity

- undermine trust in digital content

Altman’s Core Recommendation: License the Most Powerful AI Models

Altman urged governments to regulate AI the same way they regulate:

- pharmaceuticals

- nuclear technology

- aviation

- financial systems

His proposals included:

1. Licensing requirements for advanced AI models

Only approved entities should train large-scale systems.

Licenses can be revoked if rules are violated.

2. Mandatory testing before AI models are deployed

To prevent harmful capabilities from reaching the public.

3. Clear labeling of AI-generated content

So users can distinguish real from synthetic media.

4. A global regulatory framework

A worldwide AI oversight organization, similar to the International Atomic Energy Agency (IAEA), to prevent an arms race.

5. Transparency and accountability for AI companies

Where safety must take priority over competition.

Altman noted that the U.S. must lead in regulation, but emphasized Europe’s progress with the upcoming EU AI Act, which aims to impose the world’s first comprehensive AI safety rules.

Why This Matters: The AI Boom Is Outrunning the Rules

AI today is not the AI of five years ago.

It writes code.

It mimics voices.

It generates photorealistic images.

It can persuade, influence, automate, and distort reality.

Lawmakers at the hearing feared a future where tech companies rush ahead without guardrails — similar to the early Facebook era that enabled misinformation and election manipulation.

Altman’s testimony finally crystallized the urgency:

The world must regulate AI before AI reshapes the world.

Crunch Insight: What’s Really at Stake?

Here’s the deeper layer industry insiders are watching:

1. AI could destabilize job markets faster than any previous wave of automation.

White-collar workers — once considered “safe” — may face displacement.

2. Without rules, bad actors could weaponize AI.

Deepfakes, phishing, synthetic propaganda — all at industrial scale.

3. The geopolitical race is intensifying.

The U.S., China, and Europe are fighting for AI dominance.

Regulation will influence who wins.

4. OpenAI’s call for regulation is also strategic.

By supporting licensing, OpenAI could set the bar so high that only a few major players can compete.

It is both a moral stance and a competitive moat.

5. Global coordination will be extremely difficult.

Countries differ in ethics, politics, and national interests.

AI governance may become a defining geopolitical challenge of our time.

The Road Ahead: Can the World Agree on AI Governance?

The U.S. Congress now faces a historic dilemma:

How to create rules that protect society without slowing innovation.

Europe is moving fast with the AI Act.

China is building its own heavy-handed frameworks.

The U.S. is still debating.

Altman’s testimony forced lawmakers to confront the reality that AI safety is not an abstract risk — it’s a present-day challenge.

And while the world debates, AI continues to evolve every month.

Crunch Verdict

Sam Altman’s call for global AI regulation is more than a warning.

It’s a recognition that the technology capable of transforming humanity must be governed with equal force.

The world is entering an era where:

AI can build — or break — trust, economies, and societies.

The question now is whether global leaders can act fast enough.